Facebook has been under fire over the spread of misinformation connected with Russian involvement in the 2016 U.S. presidential election. In April 2018, the idea of an independent oversight board was discussed when CEO Mark Zuckerberg testified before Congress. In November 2018, Zuckerberg officially announced his intention to form an oversight board for Facebook:

> As I've thought about these content issues, I've increasingly come to believe that Facebook should not make so many important decisions about free expression and safety on our own.

> In the next year, we're planning to create a new way for people to appeal content decisions to an independent body, whose decisions would be transparent and binding. The purpose of this body would be to uphold the principle of giving people a voice while also recognizing the reality of keeping people safe.

> I believe independence is important for a few reasons. First, it will prevent the concentration of too much decision-making within our teams. Second, it will create accountability and oversight. Third, it will provide assurance that these decisions are made in the best interests of our community and not for commercial reasons.

The Board is thus meant to act like a Supreme Court, with the final say on what speech and content are allowed on the platform when there is a dispute. It will have the authority to overturn removal and other moderation decisions made by Facebook. The Oversight Board has launched its own website, which provides its charter and an overview of the appeals process for content moderation decisions made by Facebook and Instagram. The Board explains its review: "Facebook has a set of values that guide its content policies and decisions. The board will review content enforcement decisions and determine whether they were consistent with Facebook’s content policies and values. For each decision, any prior board decisions will have precedential value and should be viewed as highly persuasive when the facts, applicable policies, or other factors are substantially similar. When reviewing decisions, the board will pay particular attention to the impact of removing content in light of human rights norms protecting free expression."

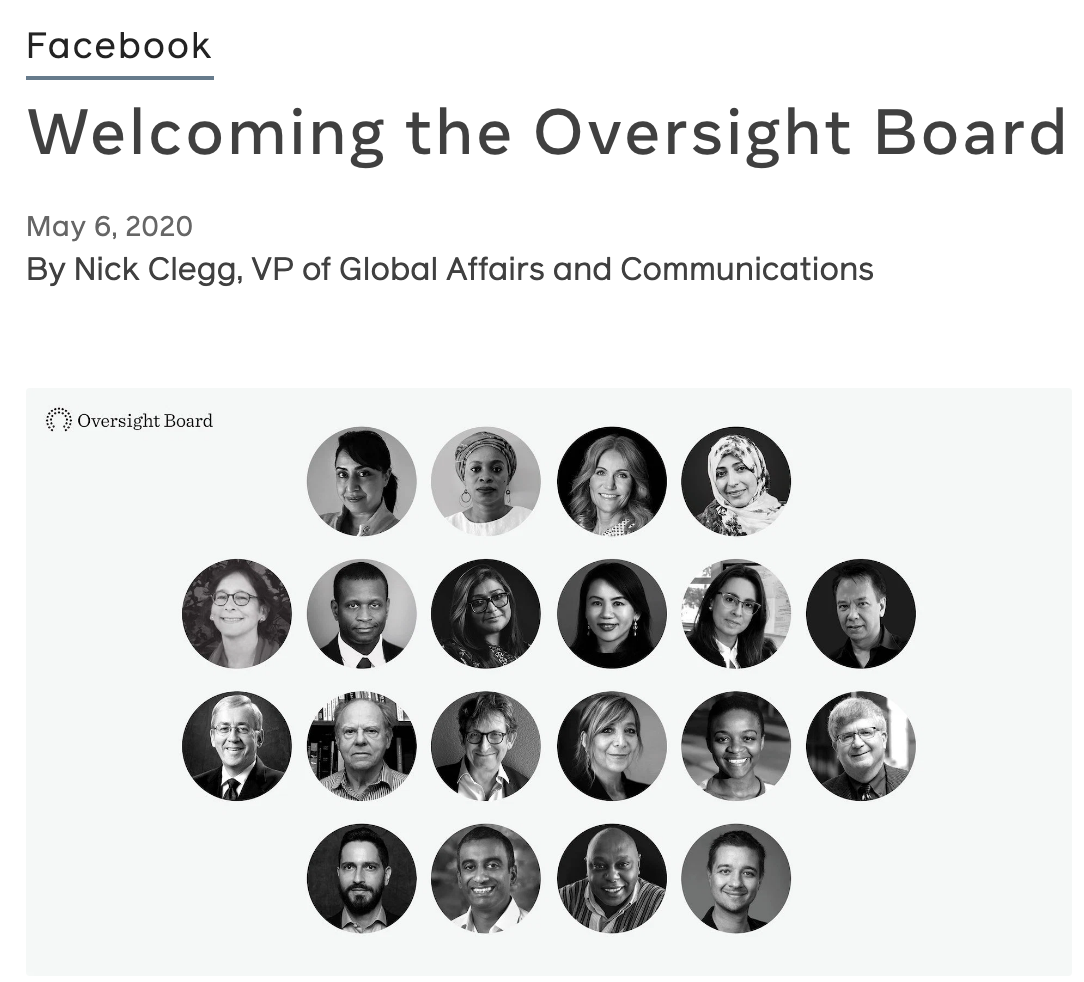

On May 6, 2020, Facebook announced, to great fanfare, the first twenty of up to forty members of the Facebook Oversight Board.

Despite the attention paid to its membership, the Board is not yet up and running. The Board announced that it will not be fully operational until “late fall,” meaning after the 2020 election. This timetable is disappointing, given that the main impetus for creating the Oversight Board was Facebook’s lack of preparedness for interference and misinformation in the 2016 election. According to Forbes, if the Board is not operational in time for the election cycle, it will be too late.

Critics have also questioned the Board's decision to initially limit its review to content that Facebook has removed as violating its rules, rather than also reviewing Facebook's decisions that certain content does not violate its community standards. The co-chairs of the Board explained in the New York Times: "In the initial phase users will be able to appeal to the board only in cases where Facebook has removed their content, but over the next months we will add the opportunity to review appeals from users who want Facebook to remove content." Facebook's decisions not to moderate potentially violating content, such as hate speech, are just as controversial as its decisions to moderate content, and Facebook has been roundly criticized by civil rights groups for allowing racist hate speech to fester on the platform.

Another concern is that the Board's review process may simply be too slow to address misinformation on Facebook. Posts reported by users will still be handled internally by Facebook's content moderation staff. Users can then submit an appeal, or Facebook may refer a problematic case to the Oversight Board, which will have up to 90 days to make a ruling, according to the Board's bylaws. Critics worry that this 90-day window will leave the Board unable to address content moderation in a timely fashion, since misinformation or other violating content remains online in the meantime. In 2020, when news can go viral in hours, if not minutes, a 90-day decision-making process does not seem adequate to address misinformation on Facebook. And for the 2020 election, the Oversight Board is already too late.

-written by Sean Liu